I was supposed to buy one power-sipping Celeron N100 mini PC to replace the aging and inefficient AMD FX-8350 in my homelab. That is what I did at first, and it worked out great. My Intel N100 with a single 14-TB USB hard disk averages around 15 watts, while the FX-8350 with four 4-TB hard disks was averaging just over 70 watts. Not only is it more efficient, but the mini PC is very nearly as fast and has nearly double the usable storage of the old server. How awesome is that?
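To put those two averages in perspective, here is a rough back-of-the-envelope sketch of what they add up to over a year of running around the clock. The wattages are the ones quoted above. The price per kWh is a made-up placeholder and not a number from my electric bill, so swap in your own rate.

```python
# Rough yearly energy comparison using the average wattages quoted above.
HOURS_PER_YEAR = 24 * 365


def yearly_kwh(average_watts: float) -> float:
    """Convert a constant average draw in watts to kilowatt-hours per year."""
    return average_watts * HOURS_PER_YEAR / 1000


old_kwh = yearly_kwh(70)  # FX-8350 server, "just over 70 watts"
new_kwh = yearly_kwh(15)  # N100 mini PC, "around 15 watts"
saved_kwh = old_kwh - new_kwh

# ASSUMPTION: placeholder electricity rate, not taken from the post.
PRICE_PER_KWH = 0.15

print(f"old server: {old_kwh:.1f} kWh per year")
print(f"mini PC:    {new_kwh:.1f} kWh per year")
print(f"saved:      {saved_kwh:.1f} kWh per year")
print(f"at {PRICE_PER_KWH:.2f} per kWh that is about {saved_kwh * PRICE_PER_KWH:.2f} per year")
```

Both figures are averages, so treat the result as a ballpark rather than a measurement.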

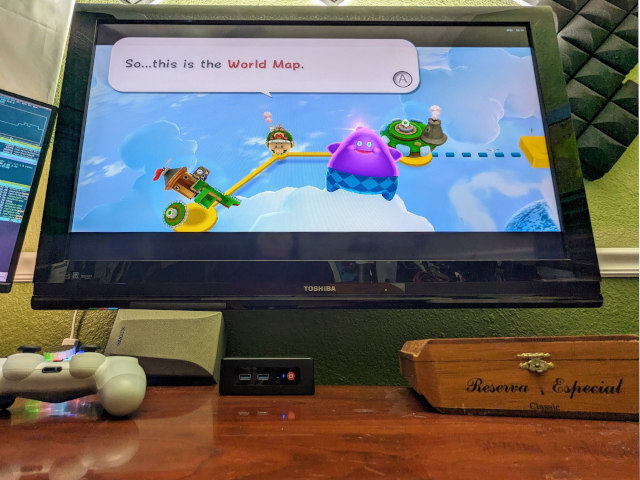

Then I bought a second N100 mini PC and decided to experiment with it before putting it directly into my homelab. I learned that the N100 has enough GPU to play some reasonably modern games, and it can emulate consoles up to and including the Nintendo Wii.

My Proxmox host mini PC with XFCE in its temporary home where I can easily plug and unplug USB devices while I continue testing

That’s what put this weird thought in my head. Why not load Steam and RetroArch on the host OS of one of my Proxmox nodes and leave it on the TV in the living room? That is as far as I got with the question because I don’t have wired Ethernet running to the TV, and I am not going to put one of my Proxmox nodes on WiFi.

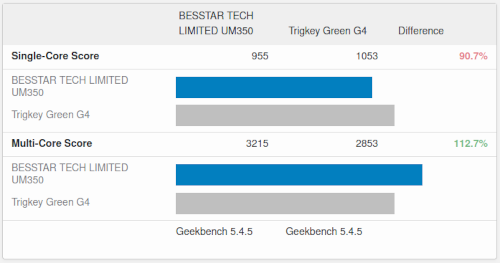

Then I bought a third mini PC with a Ryzen 3550H CPU. This older CPU is roughly comparable to the Intel N100 in both power consumption and horsepower, but the Ryzen has an integrated GPU that is 3 or 4 times faster.

These two extra Proxmox nodes have both been sitting on my desk for enough weeks, just waiting for me to install them in their permanent homes, that getting another hare-brained idea was inevitable. I am at least hoping that my new idea is a good idea!

Installing XFCE on the Proxmox install was easy!

I just had to run `apt install task-xfce-desktop`. Aside from creating a non-root user account for myself, that was it. You will have to either reboot or manually fire up lightdm yourself, and you’ll be able to log into XFCE.

I don’t believe Proxmox uses network-manager, so the network controls in XFCE aren’t going to work. That is fantastic, because I wouldn’t want XFCE goobering up my Proxmox networking settings!

I had to add myself to the sudo group and log back in before I could install Steam. That was easy.

I installed flatpak so I could use it to install OBS Studio with VAAPI. That was also easy, and it went quite smoothly.

It took me longer than I want to admit to remember the name of the project that replaced Synergy. I remembered that the modern fork of Synergy is called Barrier. Barrier was in apt, and it only took me a few minutes to get that working. Now I could move my mouse to the right edge of my monitor and start controlling the Proxmox host on my office TV.

One of our lovely friends in our Discord community pointed out that Barrier is also dead now, and that the new fork is called Input Leap. This was not in apt, so I am just going to leave my Barrier setup running for now. It is locked down to my Tailscale network, so I am not worried about it being an older piece of network code.

Why on Earth am I doing this?!

I have two reasons for wanting to run a desktop GUI, and I have one extra reason for wanting one of my Proxmox nodes to live in my office.

The first was inspired by a recent hardware problem on my desktop PC. My PSU fan started making some noise, so I had to shut it down to work on that. I happened to have a spare power supply that was beefy enough to swap in its place while I replaced the power supply’s fan, but only just barely. This could have easily been a situation where I would have had to wait for Amazon to deliver me a new piece of hardware to get up and running again!

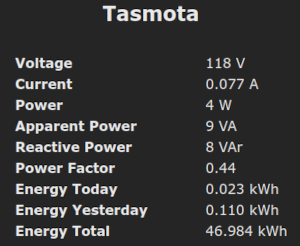

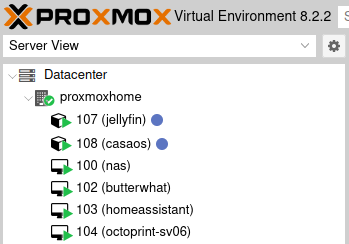

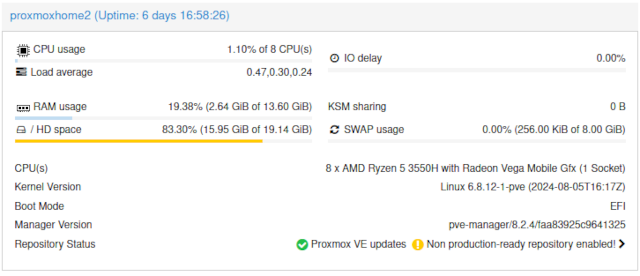

The Proxmox server is using less than 3 GB of RAM with the XFCE desktop running, OBS Studio running, and the Octoprint container running

I thought it would be nice to have a spare mini PC with a desktop GUI at my desk that I could use in an emergency. I could quickly plug in my monitor, keyboard, and mouse so I could use Discord, Emacs, and Firefox. That is plenty of functionality to keep me chugging along, and it is way more comfortable than using my laptop.

This idea all by itself seems like a good enough excuse to try this out.

If you’ve been wondering why I wouldn’t attempt to pass the GPU through to a virtual machine and isolate the desktop GUI inside, here is the first part of the explanation. When my computer stops working, I want to be able to plug my important peripherals right in and use them. I don’t want to be futzing around with passing through all the appropriate USB devices. I wouldn’t even have a good place to sit down and do that work!

A dedicated OBS streaming box might be a nice thing to have!

I am running out of USB 3 ports on my desktop PC. Some of my video gear is plugged in via USB hubs. Sometimes I need to disconnect and reconnect my USB to HDMI adapters to get them to work correctly. Even just reducing the number of USB cables that have to run toward my desktop computer will be an improvement.

I have my podcasting camera mounted upside down. This lets me see my Sony ZV-1 vlogging camera’s little display while pushing the lens as close to my monitor as possible. The USB to HDMI dongle that I use doesn’t allow for simple flipping and inverting via the V4L2 driver, so I have to pass it through something like OBS Studio to transform the camera.
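If it helps to picture what that transform has to do, here is a toy sketch. It assumes the upside-down mount amounts to a plain 180-degree rotation, which is the same as a vertical flip combined with a horizontal mirror. The tiny nested list stands in for a video frame, and the function name is made up for this example. OBS Studio does the real work on my setup.

```python
def rotate_180(frame):
    """Undo an upside-down mount: reverse the row order, then mirror each row."""
    return [row[::-1] for row in reversed(frame)]


# A pretend 2x3 "frame" where each number is a pixel.
frame = [
    [1, 2, 3],
    [4, 5, 6],
]

upright = rotate_180(frame)
for row in upright:
    print(row)
```

Applying the same rotation twice gets you back to the original frame, which is a handy sanity check.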

Having a dedicated streaming mini PC to handle my cameras, encoding, and streaming seems like it’d be handy.

The latency and frame rate of the OBS Studio output from my mini PC over VNC are both terrible, but it is more than adequate for making tweaks to the OBS Studio settings!

I am able to encode 1080p HEVC video using the GPU via VAAPI. It only uses around 40% of this tiny iGPU’s horsepower, but it has had weird encoder hiccups during the first couple of seconds of recording. I suspect it just isn’t ramping up the speed of the GPU quickly enough, but it is fine once it settles in. Worst case, I have to dial that back from H.265 to H.264.

I haven’t decided exactly how I am going to tie this into my recording and streaming setup, but I am excited to see that this little box is more than capable of handling these tasks.

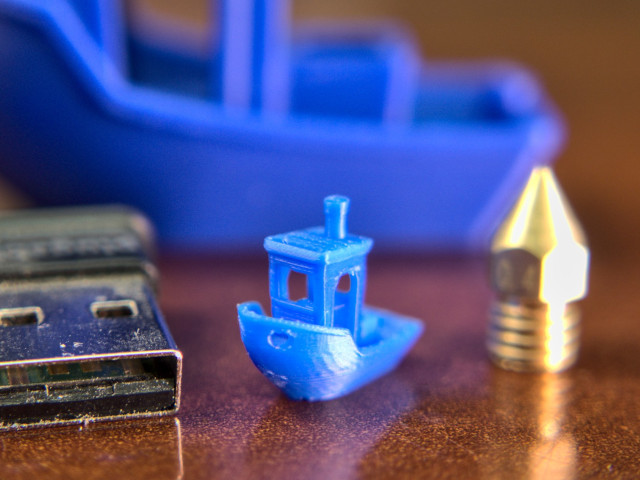

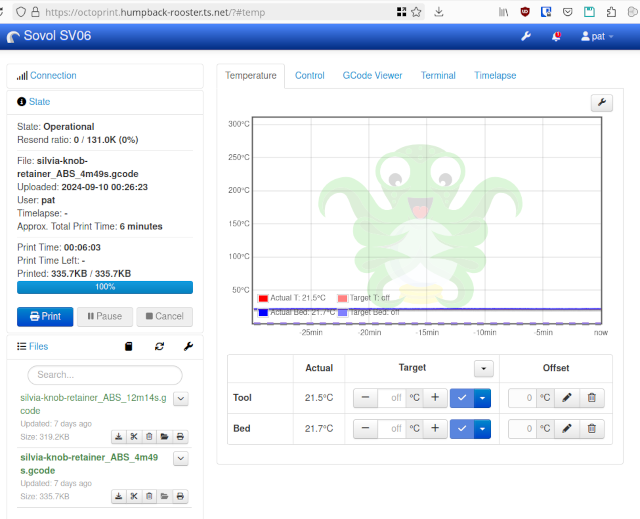

I simplified my virtualized Octoprint setup

A lot of people run Octoprint on a Raspberry Pi. I’ve always run it in a virtual machine on my homelab server. When I moved my old FX-8350 homelab server out of my office to quiet things down in here, that meant my 3D printer was now 60’ away from the Octoprint virtual machine, and I surely wasn’t going to run a 60’ USB cable across my house!

I brought one of my old OpenWRT routers out of retirement and installed socat. That let me extend a virtual serial port across my network so I was able to keep using my Octoprint instance. It also gave me an extra access point to add to my 802.11r WiFi roaming network, which was a nice bonus.

The socat setup worked most of the time, but every once in a while I would have to restart the socat processes. This didn’t happen often, but these days I only fire up my last remaining Octoprint server once every month or two. The socat process now almost always needs to be kicked before I can start printing.

I was able to plug my Sovol SV06 directly into my new quiet Proxmox server in my office. I did have to cheat, though. If I wanted to run the cable along the wall, I would have needed a 30’ USB cable to make that journey. I decided to run a cable directly across the carpet from my 3D-printer stand to my desk, so there’s 32” of USB cable on the floor with a temporary piece of duct tape helping to make sure I don’t snag it with my foot.

This cable across my floor is a terrible solution, but the Sovol SV06 will definitely be my last printer that is slow enough or old enough to be used with Octoprint. I don’t have a timeline for retiring it, but it is definitely going to happen. That means this short span of USB cable across my office floor is temporary. We just don’t know how temporary!

- Can I Use Octoprint with a Networked Serial Port?

- Marlin Input Shaping on the Sovol SV06: Three Months Later

Is this a good idea?

Should you do this with one of your production Proxmox servers at your company? Absolutely not. Should you do this at home? If you know what you are doing, I would say that it is worth a shot. I am only eating up 2.6 gigabytes of RAM having a desktop session logged in with OBS Studio running. That will be less than 10% of the available RAM in this machine once I am finished shuffling SO-DIMMs around.
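The math behind that 10% figure is simple enough to sketch. The 2.6 gigabytes comes from my measurement above. The list of installed RAM sizes is hypothetical and only there for comparison, so plug in whatever your own machine has.

```python
USED_GB = 2.6        # desktop session plus OBS Studio, as measured above
TARGET_SHARE = 0.10  # "less than 10%"


def ram_share_percent(used_gb: float, total_gb: float) -> float:
    """Return used memory as a percentage of installed memory."""
    return used_gb / total_gb * 100


# Smallest amount of installed RAM that keeps the desktop at or under the target.
min_total_gb = USED_GB / TARGET_SHARE
print(f"needs more than {min_total_gb:.0f} GB installed to stay under 10%")

# ASSUMPTION: hypothetical RAM sizes, not the actual configuration of my node.
for total_gb in (16, 32, 64):
    share = ram_share_percent(USED_GB, total_gb)
    print(f"{total_gb} GB installed: {share:.1f}% used by the desktop")
```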

Way back when I built my arcade cabinet, I started telling people that the best computer in the house to use as a NAS is the one that already has to be powered up 24 hours a day. An arcade cabinet is way more fun when you can just walk up to it, hit a button, and immediately start jumping on goombas.

If you’re already paying a tax on your electric bill to keep one computer running all day long, why not give it one more task instead of buying another machine and paying that same tax again?

The important thing to bear in mind is that you may be tying the uptime of these tasks together. When you can’t play Dead Cells because your GPU has somehow gotten itself into a weird state, and the only way to fix it is a reboot, then all the virtual machines on that Proxmox server will also wind up being restarted.

If that means your PiHole VM and Jellyfin container have to be stopped, then nobody in your house will be able to access websites, and someone’s movie stream may stop. Nobody is going to lose any sales, but it is up to you to decide how much money it is worth to avoid this situation.

Conclusion

This little mini PC project has proven to be fun, and it was as easy to get a desktop GUI running on Proxmox as I had hoped! Having a single power-sipping mini PC fitting into two or three roles at the same time seems like a good value, and I am excited to see just how much use I get out of these tertiary use cases.

Odds are pretty good that I will never need to use this Proxmox mini PC as an emergency workstation, but I will feel better knowing that it is available. I do expect to get some use out of the video recording and streaming capabilities. I have some work to do there because I want to be able to use the output from OBS Studio on the mini PC as a virtual webcam in Chrome on my desktop when I connect to Riverside.fm.

What do you think? Do you think it is silly to run a GUI on a server? I would usually be the first person to think so! Are you already doing something similar with one of your Proxmox servers? Or are you running your emergency GUI in a virtual machine? Does it seem like a good idea to overload one of my Proxmox nodes as a video capture, encoding, and streaming machine? Tell me about it in the comments, or join the Butter, What?! Discord community to chat with us about it!

- Choosing an Intel N100 Server to Upgrade My Homelab

- Do All Mini PCs For Your Homelab Have The Same Bang For The Buck?

- I Added A Refurbished Mini PC To My Homelab! The Minisforum UM350

- It Was Cheaper To Buy A Second Homelab Server Mini PC Than To Upgrade My RAM!

- Mighty Mini PCs Are Awesome For Your Homelab And Around The House

- My First Week With Proxmox on My Celeron N100 Homelab Server

- Topton 2-Bay R1 Pro NAS review at Brian’s Blog

- Using an Intel N100 Mini PC as a Game Console

- When An Intel N100 Mini PC Isn’t Enough Build a Compact Mini-ITX Server!